There’s a lot to unpack on those questions. Let’s go.

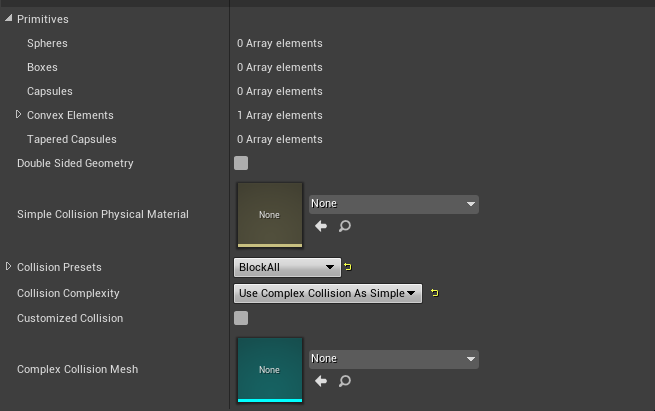

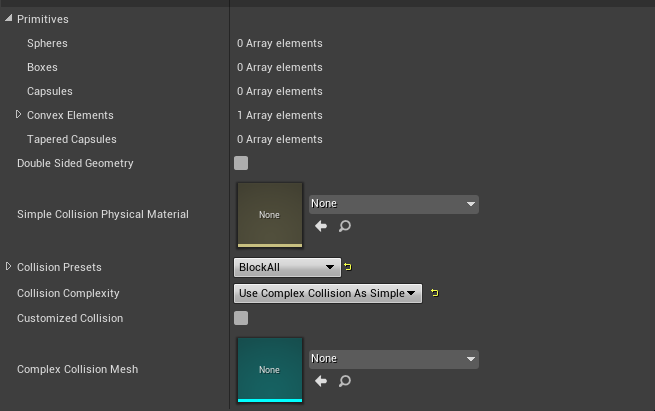

Static Meshes in Unreal store their Collision Data inside the asset itself, and that data is processed at import time.

If an FBX-imported Static Mesh doesn’t include a user-authored collision mesh, prefixed with UBX_, UCP_, USP_ or UCX_ (Box, Capsule, Sphere and Convex, respectively) and sharing the name of the visual mesh it belongs to (e.g. Cube_001 & UBX_Cube_001), Unreal can generate the collision automatically at import time, based on the Complex Static Mesh.
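To make the naming rule concrete, here is a rough sketch of how collision objects could be matched to their visual meshes purely by name. It is not Unreal's importer code, just an illustration of the prefix convention described above; `pair_collision_meshes` and `needs_auto_collision` are names I made up.

```python
# Prefix-to-shape mapping, as listed in the post.
COLLISION_PREFIXES = {
    "UBX_": "Box",
    "UCP_": "Capsule",
    "USP_": "Sphere",
    "UCX_": "Convex",
}


def pair_collision_meshes(object_names):
    """Group collision object names under the visual mesh they are named after.

    Hypothetical helper: only handles the exact '<prefix><visual mesh name>' form.
    """
    names = list(object_names)
    prefixes = tuple(COLLISION_PREFIXES)
    # Anything without a collision prefix is treated as a visual mesh.
    pairs = {n: [] for n in names if not n.startswith(prefixes)}
    for name in names:
        for prefix, shape in COLLISION_PREFIXES.items():
            if name.startswith(prefix):
                target = name[len(prefix):]
                if target in pairs:
                    pairs[target].append((shape, name))
                break
    return pairs


def needs_auto_collision(pairs):
    """Visual meshes with no user-authored collision (candidates for auto-generation)."""
    return [mesh for mesh, shapes in pairs.items() if not shapes]
```

With the names `Cube_001`, `UBX_Cube_001` and `Tree_01`, the cube gets its Box collision and only `Tree_01` is left as a candidate for automatic generation.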

This will generate a simplified Convex.

More detailed info here:

This usually doesn’t give enough detail for most situations, so you can either build your own collision mesh in your DCC or use the more expensive option, which is setting

This will certainly be very detailed and will work well for projectiles and finer simulation, but it’ll be more expensive.

I suppose the Generated Mesh Operators (like FCube) could have an option to add one of the collision types (Box, Sphere, Capsule or Convex) to the array, sized from the entity’s bounding box and chosen according to the type of generated mesh. Convex would be used for more complex primitives (such as cylinders).
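Roughly what I have in mind, as an engine-agnostic sketch: pick the collision shape from the kind of generated mesh and size it from the bounding box. The type names, the `DEFAULT_COLLISION` mapping and the assumption that a capsule's long axis is Z are all mine, not something the tool or Unreal defines.

```python
from dataclasses import dataclass


@dataclass
class Bounds:
    min: tuple  # (x, y, z) of the lowest corner
    max: tuple  # (x, y, z) of the highest corner


# Hypothetical defaults: which simple collision suits which generated mesh type.
DEFAULT_COLLISION = {
    "cube": "Box",
    "sphere": "Sphere",
    "capsule": "Capsule",
    "cylinder": "Convex",
}


def collision_from_bounds(mesh_type, bounds):
    """Describe a simple collision shape for a generated mesh from its bounding box."""
    center = tuple((lo + hi) / 2 for lo, hi in zip(bounds.min, bounds.max))
    size = tuple(hi - lo for lo, hi in zip(bounds.min, bounds.max))
    shape = DEFAULT_COLLISION.get(mesh_type, "Convex")

    if shape == "Box":
        return {"shape": "Box", "center": center,
                "half_extents": tuple(s / 2 for s in size)}
    if shape == "Sphere":
        # Enclose the largest dimension of the box.
        return {"shape": "Sphere", "center": center, "radius": max(size) / 2}
    if shape == "Capsule":
        # Assumption: the long axis is Z.
        radius = max(size[0], size[1]) / 2
        half_height = max(size[2] / 2, radius)
        return {"shape": "Capsule", "center": center,
                "radius": radius, "half_height": half_height}
    # A bounding box alone is not enough for a convex hull; it needs the vertices.
    return {"shape": "Convex", "center": center, "source": "vertices"}
```

Anything not in the mapping falls back to Convex, which matches the idea of using Convex for the more complex primitives.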

However, when procedurally generating complex meshes (like trees, for instance), it would be nice to have an Object Collision Operator that could replace the Convex Object with a generated one. That way you could procedurally generate a simpler collision for your mesh following your own ruleset.

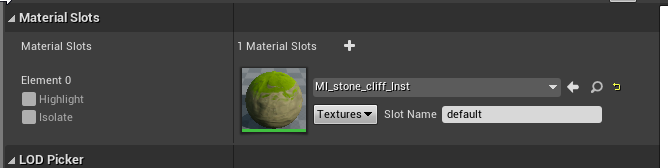

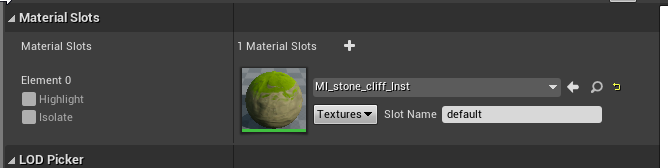

About Materials

I find assigning a material through the Vertex Color a little strange, because you end up applying it to the whole object. Unless I’m missing something, you can’t paint individual vertices, which is the whole point of vertex colors.

Vertex Colors are normally used to blend between materials, or to spawn meshes, foliage or actors on the surface of other actors based on the vertex weight, among all sorts of other uses.

I also found it strange that you can “Paint” a Generated mesh, but not assign a material to a placed one.

What would make more sense (in my head at least) would be to assign a Material to any object (not just Generated Meshes). The resolve node would work exactly the same, but the Material Node would take the ID input and push the Resolved Material to a Material Slot.

Objects can have any number of Material Slots (I don’t know what the limit is).

Most meshes only have slot 0.
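As a rough sketch of that flow (none of these names come from the tool or from Unreal, they are only there to illustrate the idea): the resolve step turns an ID into a material, and the Material Node writes it into a slot on whatever object it is given, defaulting to slot 0.

```python
def resolve_material(material_id, library):
    """Hypothetical resolve step: look up a material by its ID."""
    return library[material_id]


def assign_material(slots, material, slot_index=0):
    """Hypothetical Material Node: return a new slot list with `material` in `slot_index`.

    Missing slots below `slot_index` are filled with None (no material assigned).
    """
    new_slots = list(slots)
    while len(new_slots) <= slot_index:
        new_slots.append(None)
    new_slots[slot_index] = material
    return new_slots
```

Because it only touches the slot list, the same node would work for a placed mesh and a generated one.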

So, to answer your questions:

1 - Assigning a collision channel instead of rendering would be a bit weird (Generated meshes should have the option to have collision or not).

2 - Having Material or collision would be very weird. As a user, “you can either paint or collide” doesn’t sound right. You need both.

3 - I don’t think Generating a Mesh twice is necessarily bad. I don’t know about runtime performance implications, but it could be as simple as taking the generated object output and passing it into an Add Collision node, which would work really well for offline generated content.
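A minimal sketch of that last idea, assuming the generated object is just a bag of data with a vertex list: the Add Collision step reads the output of the generator and appends a box collision fitted to it, without touching the render mesh. `add_box_collision` and the dictionary layout are hypothetical.

```python
def add_box_collision(generated):
    """Hypothetical 'Add Collision' node.

    Takes a generated object (a dict with a 'vertices' list of (x, y, z) tuples)
    and returns a copy with an axis-aligned box appended to its 'collision' array.
    """
    xs, ys, zs = zip(*generated["vertices"])
    entry = {
        "shape": "Box",
        "min": (min(xs), min(ys), min(zs)),
        "max": (max(xs), max(ys), max(zs)),
    }
    # Copy the object and extend the collision list; the input is left unchanged.
    return {**generated, "collision": generated.get("collision", []) + [entry]}
```

The render data passes straight through, so the mesh is only generated once and the collision is layered on afterwards.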